Introduction

Since I have been adding PoE security cameras and more home automation, I felt it necessary to build a small server to handle these workloads instead of using my gaming PC with Hyper-V.

The reason behind this build: originally, I was looking at a Synology NAS.

For reference, a DS920+, which has a quad-core CPU, 4 drive bays, and 4GB of RAM (expandable to 8GB), would run you around $559 on Amazon.

A huge downside: if any piece of hardware on the unit fails, you are at the mercy of the vendor to replace it. Since the volumes use Synology's own layout rather than a plain, standard RAID setup, you cannot simply plug your drives into another PC and expect them to work.

I felt I could build a competing piece of hardware for a similar or lower price, while allowing MUCH more flexibility and room for expansion.

Granted, for users who just want to plug something in and have it work, a Synology/Drobo/QNAP is just fine. But for my uses, I find it more effective to build my own.

Specifications / Parts / Prices

Prices were captured when this article was published and may have changed since.

| Price | Part | Retailer |
|---|---|---|
| $82.95 | CyberPower AVRG750U | Amazon |
| $99.99 | Samsung 970 EVO 500GB M.2 NVMe | Amazon |
| $72.99 | Gigabyte B450M DS3H | Amazon |
| $91.97 | AMD Ryzen 3 3200G 4 Core 3.6GHz base | Amazon |
| $109.99 | Fractal Design Node 804 Case | Amazon |
| $62.99 | G.SKILL Aegis 2x8GB DDR4 3000 | Amazon |
| $69.99 | Corsair CX450M 450W BRONZE PSU | Amazon |

Excluding the UPS, this brings the grand total to $507.92, which is still less than the aforementioned Synology unit, while being significantly more powerful and expandable. As a bonus, this server already has 500GB of NVMe storage, while the base price of the Synology includes NO storage.

Disclaimer: Amazon affiliate links are used in this article. For this site, I choose not to pester my audience with annoying advertisements, and instead rely only on affiliate links to support this hobby. By using an affiliate link, you will pay the same price on Amazon as you would otherwise, but a small percentage will be given to me. To note: I DID buy all of the products shown with my own money, and did not receive any incentive to feature or use them.
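As a sanity check on that total, here is a minimal Python sketch that sums the prices from the table above while leaving out the UPS. The names `PARTS` and `build_total` are made up for illustration, and the $559 figure is just the approximate DS920+ price quoted earlier.

```python
from decimal import Decimal

# Prices exactly as listed in the parts table (captured at time of writing).
PARTS = {
    "CyberPower AVRG750U": Decimal("82.95"),
    "Samsung 970 EVO 500GB M.2 NVMe": Decimal("99.99"),
    "Gigabyte B450M DS3H": Decimal("72.99"),
    "AMD Ryzen 3 3200G": Decimal("91.97"),
    "Fractal Design Node 804 Case": Decimal("109.99"),
    "G.SKILL Aegis 2x8GB DDR4 3000": Decimal("62.99"),
    "Corsair CX450M 450W BRONZE PSU": Decimal("69.99"),
}

UPS = "CyberPower AVRG750U"


def build_total(parts, exclude=(UPS,)):
    """Sum part prices, skipping anything named in `exclude` (the UPS by default)."""
    return sum(
        (price for name, price in parts.items() if name not in exclude),
        Decimal("0"),
    )


if __name__ == "__main__":
    server_only = build_total(PARTS)
    print(f"Server only:      ${server_only}")
    print(f"Including UPS:    ${build_total(PARTS, exclude=())}")
    print(f"Under the DS920+: ${Decimal('559') - server_only}")
```

Using `Decimal` instead of floats keeps the cents exact, so the server-only sum comes out to 507.92, matching the figure above.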

Other Parts

I also had these parts lying around:

- 4x 2TB mixed-vendor SATA HDDs

- LSI 9240-8i.

To note: the LSI HBA is not required for this build, as the motherboard has 4 onboard SATA ports. If more ports are required, you can buy a cheap SATA JBOD HBA for around $35, which will do the job just as well.

The Build

Here are all of the parts, ready to be put together.

Everything ready to be assembled.

Top-less porn shot.

Interior of the case before adding the motherboard

After adding/mounting the motherboard.

One of the two drive caddies loaded.

The back-side of the case, with one of the two-drive caddies populated.

How does it run?

This post is being completed many months after I had put the server into place…

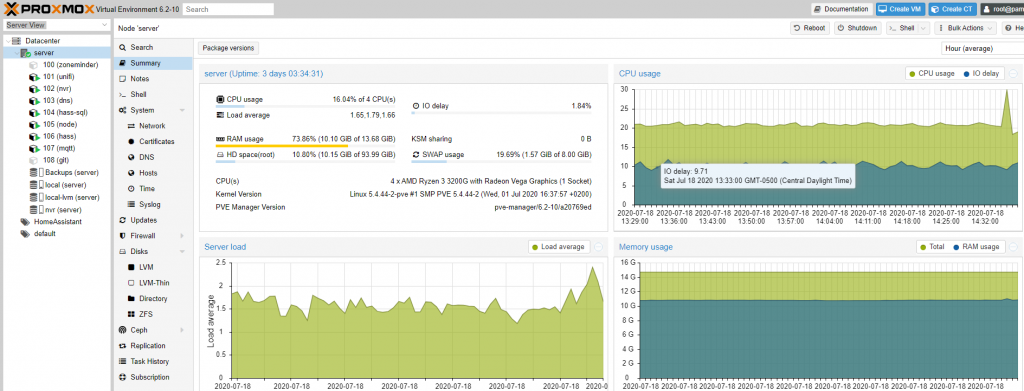

So far, running 7 containers, it is working flawlessly. Under load, the power draw is pretty minimal, and it makes no noticeable noise. If you didn’t know where to find it, you would never know it was there.

While some may have questioned my choice of a 3200G for $90, under its current load (running ZoneMinder for four 5MP cameras, plus all of my home automation) it sits at only around 20% utilization and has much more room to grow.

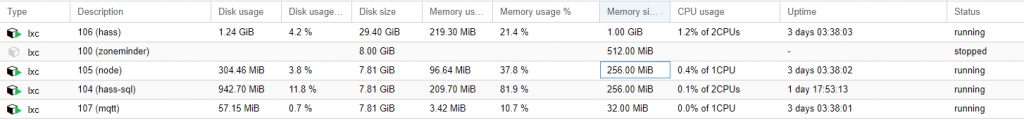

As far as the 16GB of RAM goes, 80% of it is used only for ZFS caching. In the image below, you will notice that only around half a GB of memory is used by my Home Automation group.

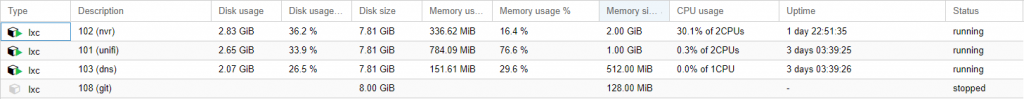

For my other group of servers, you will notice slightly over 1GB of RAM combined across all of the containers.

This goes back to my earlier claim, 80% of the RAM is used for ZFS and/or File caching.
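If you want to check the same thing on your own box, the following is a rough Python sketch rather than the tool I used for the screenshots. It assumes a Linux host with OpenZFS, where the ARC statistics are exposed at `/proc/spl/kstat/zfs/arcstats` and total memory at `/proc/meminfo`. The function names are hypothetical, and it only counts the ZFS ARC, not the regular Linux page cache.

```python
def arc_size_bytes(arcstats_text):
    """Return the current ARC size from arcstats text (rows are: name, type, data)."""
    for line in arcstats_text.splitlines():
        fields = line.split()
        if len(fields) == 3 and fields[0] == "size":
            return int(fields[2])
    raise ValueError("no 'size' entry found in arcstats")


def mem_total_bytes(meminfo_text):
    """Return total RAM from /proc/meminfo text (MemTotal is reported in kB)."""
    for line in meminfo_text.splitlines():
        if line.startswith("MemTotal:"):
            return int(line.split()[1]) * 1024
    raise ValueError("no 'MemTotal' entry found in meminfo")


def arc_share(arcstats_text, meminfo_text):
    """Fraction of total RAM currently held by the ZFS ARC (0.0 to 1.0)."""
    return arc_size_bytes(arcstats_text) / mem_total_bytes(meminfo_text)


def live_arc_share(
    arcstats_path="/proc/spl/kstat/zfs/arcstats",  # assumed OpenZFS-on-Linux path
    meminfo_path="/proc/meminfo",
):
    """Read both files from the running system and return the ARC share."""
    with open(arcstats_path) as arc_file, open(meminfo_path) as mem_file:
        return arc_share(arc_file.read(), mem_file.read())
```

On the host itself, `print(f"{live_arc_share():.0%}")` prints the ARC's share of RAM as a percentage.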

Even for hard disk usage, you will notice that most of my containers are very small. My MQTT server uses only 67MB on disk.

If you would like to learn how to make incredibly small LXC containers with next to no overhead, I would recommend checking out Alpine Linux. My Alpine containers have been absolutely amazing to manage and deploy, while using next to no resources at all.

The ONLY issue I have had with this build so far: my LSI 9240-8i died a few weeks ago. Granted, the unit was already old when I purchased it off of eBay many years ago (and I then used it in my old FreeNAS/Plex server for years).

I replaced it with an LSI 9207-8i off of Amazon for $60. After replacing it, my ZPools came back online with no issues at all, requiring no configuration whatsoever.

Notes

If you plan on doing a lot of ZFS, I would recommend 32GB of total RAM.

If you are doing compute-heavy workloads, spend $100 more and get a Ryzen 5 3600. With 6c/12t and a 65W TDP, it is efficient, yet powerful.

Finally, the secret to my abnormally low RAM/disk usage for my containers: create LXC containers using the Alpine Linux template. It is really small, and has been working extremely well.

Future Plans

Currently? None. The server does everything I need it to do, with plenty of additional capacity.

When the CPU is upgraded in my Gaming Rig / Workstation, I will be dropping the Ryzen 5 3600 6c/12t into the server.

6 Months Later

So, I came back to update the current status and progress after 6 months.

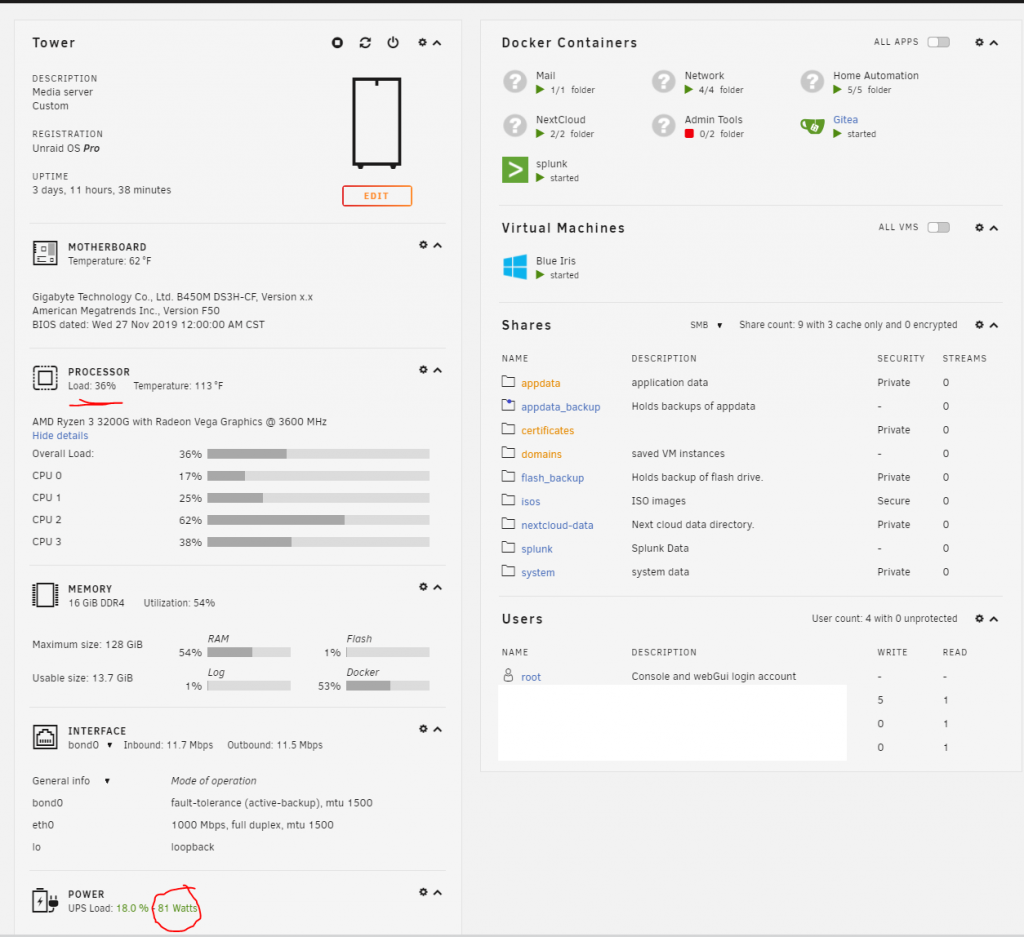

For one, I have switched everything over to Unraid. For my needs, it has been far quicker to deploy, and easier to manage. I cannot say enough good things about it.

Other than that, the hardware is still exactly the same, and trucking along.

With all of my containers and Blue Iris running, I am only seeing around 30-40% average processor utilization, leaving plenty of free capacity.

Power draw at the UPS is 80 watts. This includes my Unifi POE-8 switch, as well as two POE cameras.
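To put that 80 watt figure in perspective, here is a small Python sketch that turns a constant power draw into yearly energy use and cost. The function names are hypothetical, and the electricity price of $0.15 per kWh is purely an assumption for illustration, so plug in your own rate.

```python
HOURS_PER_YEAR = 24 * 365


def annual_kwh(watts):
    """Energy used in one year by a load drawing `watts` continuously."""
    return watts * HOURS_PER_YEAR / 1000


def annual_cost(watts, price_per_kwh):
    """Yearly cost of that load at a flat price per kWh."""
    return annual_kwh(watts) * price_per_kwh


if __name__ == "__main__":
    draw_watts = 80            # measured at the UPS, per the text above
    assumed_rate = 0.15        # assumption: USD per kWh, not from the article
    print(f"{annual_kwh(draw_watts):.1f} kWh per year")
    print(f"${annual_cost(draw_watts, assumed_rate):.2f} per year at the assumed rate")
```

At a constant 80 watts, that works out to 700.8 kWh per year, before accounting for any idle periods or disk spin-down.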

Memory utilization is only around half, leaving plenty of free capacity.

The moral of this story: not everybody needs a server with a $2,000 Xeon, i9, or something ridiculous.

I am running a pretty decent chunk of services from an $80 quad-core processor, without any issues at all. It makes no noise whatsoever, and draws very little overall power.

“The moral of this story- not everybody needs a server with a 2,000$ xeon, i9, or something ridiculous.

I am running a pretty decent chunk of services, from a 80$ quad core processor, without any issues at all.”

Replaced it because the CPU wasn’t beefy enough. Ironic, lol

The ironic part is that I am in the process of moving most of my servers to a k8s cluster composed of SFF and MFF OptiPlex 7040s, all of which cost under $100 each!

The R720XD still has its place, as shoving 100T of storage into a bunch of SFF cases doesn’t generally work out very well.

But, as of this moment, the big server I have is basically only being used for bulk storage and Backups.

All of the small services are hosted on SFFs.

Oh, would you mind sharing why you sold the server?

The short story: I needed a LOT more resources than this server could offer.

Essentially, I sold this server, which funded this build: https://xtremeownage.com/2021/03/16/2021-server-and-gaming-pc-build/

That, in turn, got replaced a few months later by a Dell R720XD, because 48G of RAM wasn’t enough either.

Hi! I want to do something like you, but with a little bit of a challenge. I would like to stay at around 25W max when the HDDs are sleeping (not spinning). What would you recommend? Is it even achievable? Do your HDDs spin down?

I am currently running Home Assistant Supervised with around 15 add-ons (Nextcloud, some ..arrs, z2m, sql, and small ones), MiniDLNA, and some kind of NAS-like backup. I have a main SSD for the system (RaspiOS 64-bit) plus two 1.5TB drives for the “NAS”. The drives are connected through USB-SATA converters, via a USB hub, to the Pi. I also have a Zigbee dongle connected over USB. The power draw when the two 1.5TB drives are not spinning is 10-14 watts.

I would like to move to a “server” because:

> I can only reach around 30MB/s when doing file transfers over LAN

> I want to use the SATA connectors on the motherboard (not the converters; that's why I do not want a NUC)

> I had a crash where no backup image worked (even though the images worked previously; probably something is burned on the Pi. Installation from scratch works. Strange.). I would like to avoid that by using Proxmox or similar on a server.

> My vision is to have Home assistant + those addons on the server and OMV

Well, I don’t have specific power numbers for this build, as I sold the server sometime last year. My documentation tells me this build drew around 80W, including all of its hard disks, HBAs, etc.

Unraid was good for reducing power consumption because it would automatically spin down disks, and you could use NVMe for caching files to keep the disks from spinning up.

As for using Proxmox with Docker/HASS/etc., it worked perfectly.

I will note, I do have a few HP Z240 SFF PCs lying around, which I use as a firewall and for other purposes. I have measured them at 20W of power draw while running. They are quite cheap to acquire via eBay, too. With an i5-6500 and 8G of RAM, they are capable of quite a bit.

What would your updates to the build be if you were to do it again today? I see that the Gigabyte mobo is no longer being sold new, and I’m about to pull the trigger on this build after reading your story. Thank you for putting this all together.

Actually,

I upgraded it today. I doubled the ram, to 32gb total.

Last week, I added a new 4-port intel NIC, which allows me to specify a dedicated interface per vlan.

If I were to build this server again, I would go with a beefier processor. While the cheap quad core is trucking along just fine, it would be nice to have quite a bit more power for Plex.

Next month, I plan on adding 4x6TB disks for a bit more storage.

Thanks for your response. If you couldn’t get your hands on that motherboard, what would be your next choice?

A different one with more x16 pcie slots, ideally- 3.

And at least two on board nvme slots.

Reasons-

A good HBA uses a x16 slot.

A gpu, uses an x16 slot.

A 4x nvme to pcie, uses an x16 slot.

I wanted to add a gpu for encoding purposes, however, I am limited on space.

I want to add more nvme for cache redundancy… however slots are limited.

The choice I picked for this article was for cost savings, in the name of building a 100% capable, brand new server for under $500. It is one of the sacrifices which was acceptable.

In 2021, excluding Threadrippers or used server equipment, I’d go with the Asus X570 ACE WS. It's the only board with three PCIe x16 slots running at x8. The third one goes through the chipset, which has an electrical x4 link to the CPU.

If I were to rebuild today, I’d go with the Asus X570 ACE WS, put the GPU in the top slot, the NVMe card in the middle (the top and middle slots use lanes off the CPU), and the HBA in the bottom slot.

It has dual gigabit lan, realtek and intel, remote management, and supports ECC if needed.

So, it's been 10 months since you asked this question.

My setup today is an R720XD server with dual E5-2695v2 CPUs (24c/48t), 128GB of RAM, and a touch over 100 terabytes of combined storage, including a couple terabytes of NVMe. As well, it sports 40GbE.

So, if I were to do any of this again, I would have purchased an R730XD instead of an R720XD (it supports PCIe bifurcation and is a tad more efficient, too), and purchased a lot more hard drives back when they were cheap.

If you are wondering how/why I moved from a quiet, efficient server up to this monster, it's mostly due to media streaming and the MANY containers and services running on my network. As well, this site you are reading right now is hosted on my server, along with a few other public-facing websites for customers.

Thanks for sharing this build. I also started to play with a proxmox server about a year ago with slightly more modest hardware: an AMD FX-6300 6core CPU, 12 GB RAM, and it’s running rather well. I stumbled upon this page after reading your post about unraid and I am now really interested in giving it a try. Did you encounter any issues whatsoever in transferring your vms or containers from proxmox to unraid?

Essentially, I had to rebuild all of the containers from scratch. However, with Unraid's built-in Docker support, it was closer to: click the template, let it install automatically, and then restore the configurations scraped from Proxmox.

I will also say- for the first week, I actually ran proxmox as a VM inside of unraid, successfully, while I migrated my containers and VMs over. I was able to mount its physical disks as a VM, without any issues.

For my NVR VM, I actually just rebuilt it from scratch, and ended up going with Blue Iris on Windows Server instead of an LXC container running ZoneMinder. I have been extremely satisfied with the switch.

On this post- https://xtremeownage.com/2020/10/20/unraid-vs-proxmox-my-opinions/ I do share a bit of my experiences with unraid. Overall, it has been 98% positive. The ONLY downside, has been a small instability issue regarding USB 3.0 with unraid.

Edit-

With that said, Proxmox was rock solid as well. My only complaints with it were its handling of storage if you choose to utilize ZFS or LVM pools.

Could you tell me more about how you moved from ZoneMinder to Blue Iris? And I assume you put Blue Iris in a container?

Blue Iris is installed inside of a windows VM. As of the last time I checked, the only supported method for running BI was with windows.

However, it was 100% worth moving to Blue Iris in my opinion. The reliability has been wonderful. I have virtually had no problems at all running it. With zoneminder, I would randomly log in to discover no recordings, and other random issues, without any notifications.

In my current setup, I do have a physical drive passed directly into the virtual machine, which allows Blue Iris to directly write to the physical, allocated disk.

Overall, I am much happier running Blue Iris, despite having to do it via a virtual machine.

Excellent build, I appreciate the direct links. I built one for myself two weeks ago, and it has been working fantastically!

Glad you enjoyed it!
