Introduction

A while back, I did a 10/40G Network Upgrade. This involved adding a new Brocade ICX6610 core switch, capable of line-speed routing and forwarding across all ports.

You can see the previous state of my rack with pictures and details in my Rack em Up post.

While this switch is an absolute unstoppable monster, it comes with two drawbacks: it makes a lot of noise, and it uses a lot of energy. My calculations place its energy consumption around 200 W, which is nearly what my massive server consumes.

So, the plan… is to completely replace it, WITHOUT degradation of services.

I plan on doing this by removing the ICX6610 and moving all routing up to my firewall. Previously, the ICX6610 handled routing between my various subnets.

Getting Started

New Core Switch

Sometime earlier this year, I ran out of PoE ports and acquired a Zyxel GS1900-24EP 24-port gigabit PoE switch. While there is nothing fantastic about this switch, it can do VLANs and manage PoE devices. It does exactly what I ask of it. The key piece here: it is silent and has low power consumption.

The downside: it has no 10G or faster uplink ports.

I decided to reinstall an old quad-port gigabit NIC into my OPNsense firewall. I then ran a 4-link LAGG from the firewall to the Zyxel.

Afterwards, I moved my PoE cameras, APs, living room switch (powered via PoE), iDRAC, and other gigabit loads to the Zyxel. I pointed the VLANs in OPNsense from the old 10G LAGG to the new LAGG connected to the Zyxel.

After a bit of configuration, I had all of my security cameras, IoT devices, etc. working as expected.

How to maintain 10G connectivity from my PC, to my NAS

One of the big reasons I upgraded to 10/40G this year was to ensure I had a very fast iSCSI connection to my NAS, allowing me to use storage from my server. I use this to host my secondary Steam library for less often played games, and other bulk storage.

However, without a 10/40G switch in place, I decided to leverage the two 10G ports already on my OPNsense firewall. I dedicated one of the 10G ports to my TrueNAS server, and the other 10G port to my bedroom/office.

While this is far less than the 40G of dedicated bandwidth my server had before, I don’t imagine this should be a bottleneck very often… if ever. One of these days, I still plan on running a dedicated 40G link just for iSCSI.

Initial Benchmarks

So, after moving everything over and setting up a new network for my office on OPNsense, I decided to run benchmarks to determine the level of performance, since my traffic now has to be routed instead of switched.

Initial Network Benchmark

I have grown to enjoy using OpenSpeedTest for quick, simple network benchmarks.

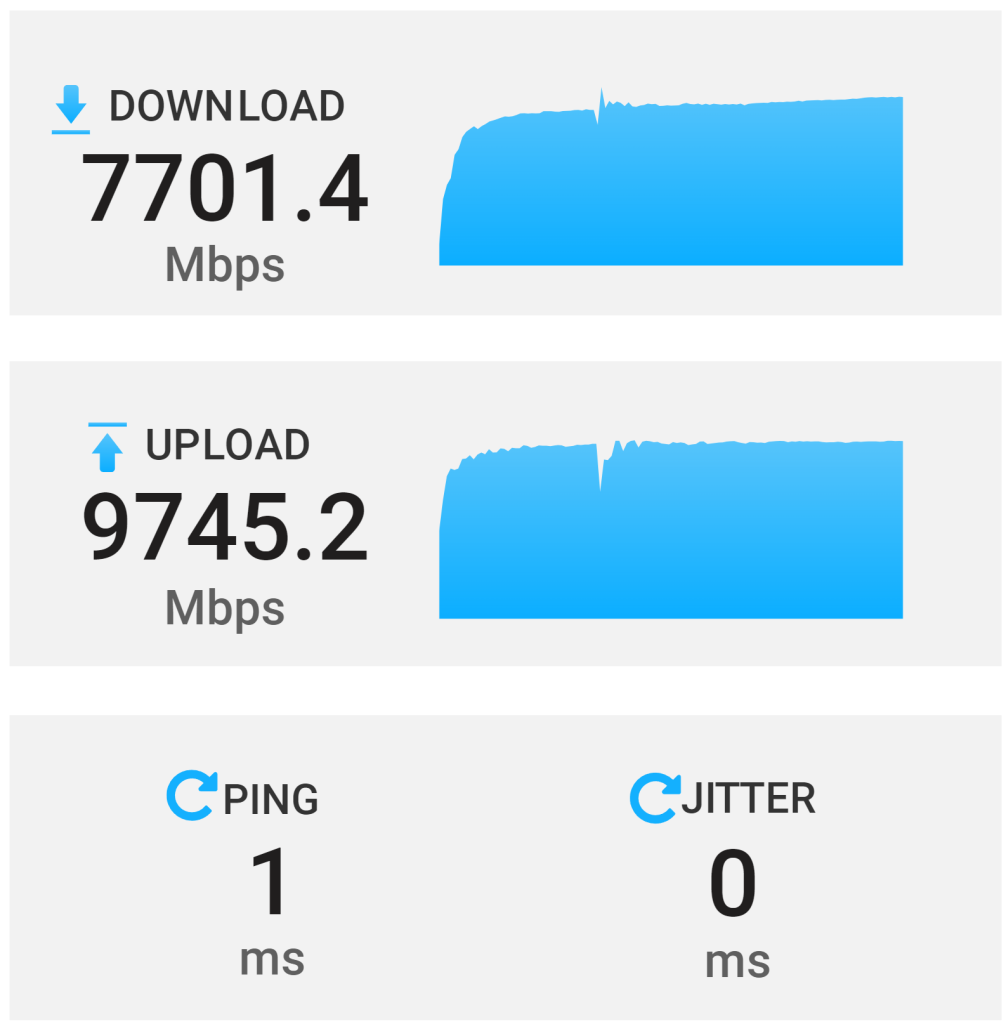

Without doing any configuration, the network benchmark isn’t horrible. I am getting 70%-80% of the expected download, with nearly 100% of the expected upload.

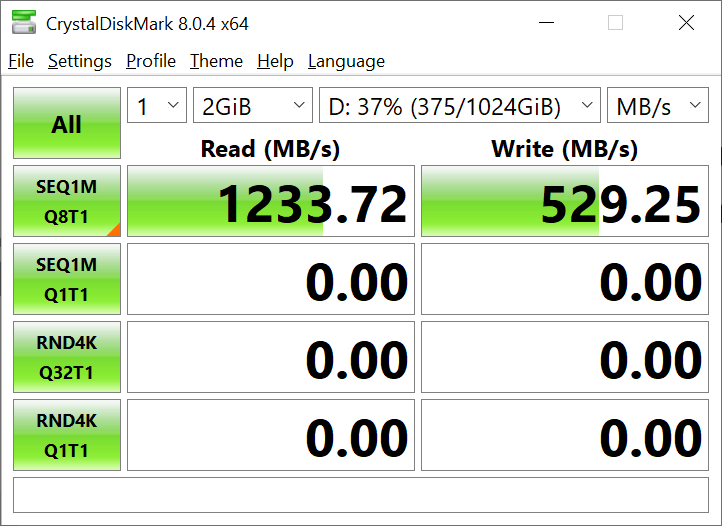

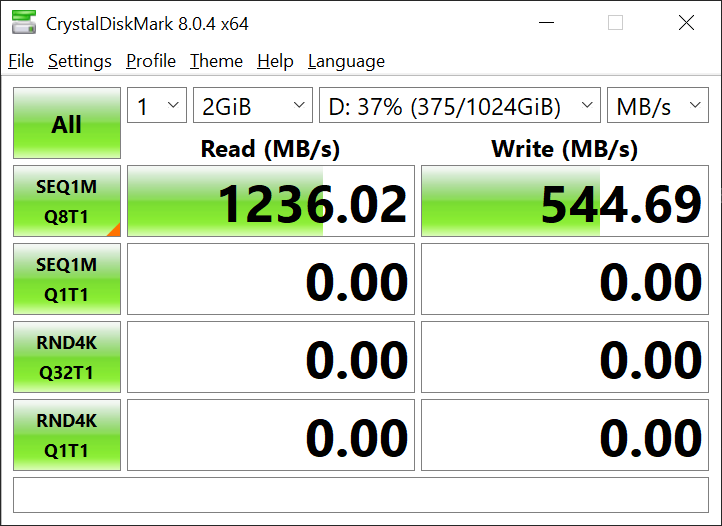

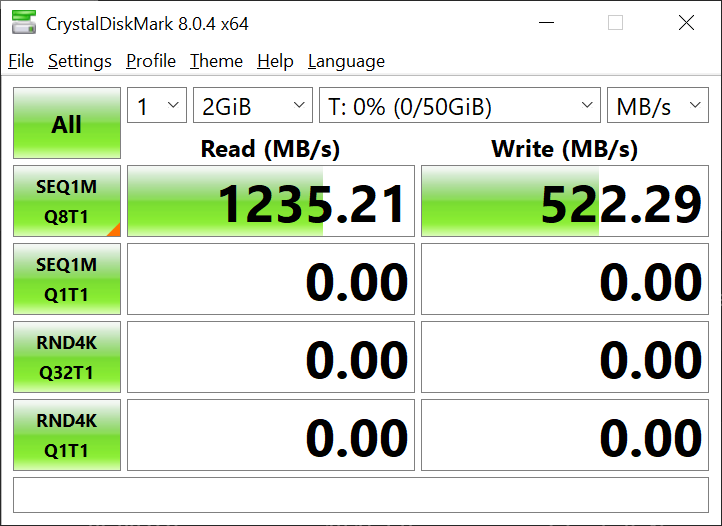

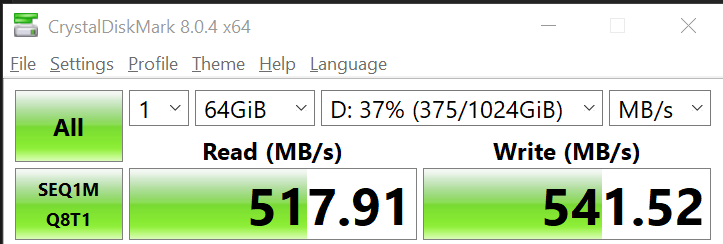

Initial ISCSI benchmark

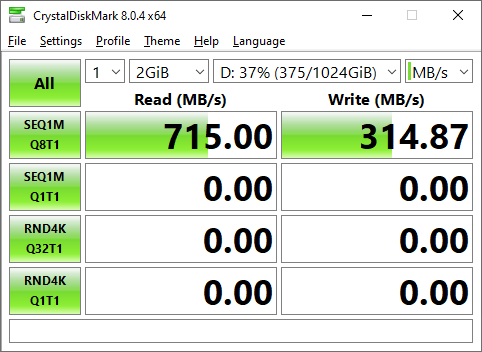

Around 1,200 MB/s is the maximum speed realistically obtainable over a 10G connection. However, I am getting far below what is possible. The write speed is literally a quarter of what the network is capable of.

While my main iSCSI mount is only an 8-disk Z2 array, these results are… quite low.
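As a rough sanity check on that 1,200 MB/s figure, the sketch below estimates the best-case TCP payload rate on a 10G link. It assumes untagged Ethernet framing and IPv4 plus TCP headers without options; the function name and constants are illustrative, and iSCSI's own headers (or a VLAN tag) would lower the numbers slightly.

```python
# Rough ceiling for TCP payload throughput on Ethernet at a given MTU.
# Assumptions: untagged frames, IPv4 + TCP headers without options.
# Real iSCSI traffic adds its own headers, so expect slightly less.

ETH_OVERHEAD = 8 + 14 + 4 + 12   # preamble/SFD + header + FCS + inter-frame gap
IP_TCP_HEADERS = 20 + 20         # IPv4 header + TCP header, no options


def max_payload_mb_per_s(link_gbps: float, mtu: int) -> float:
    """Return the best-case TCP payload rate in MB/s (1 MB = 10**6 bytes)."""
    line_rate = link_gbps * 1e9 / 8 / 1e6          # raw line rate in MB/s
    efficiency = (mtu - IP_TCP_HEADERS) / (mtu + ETH_OVERHEAD)
    return line_rate * efficiency


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: ~{max_payload_mb_per_s(10, mtu):.0f} MB/s")
```

Under these assumptions the ceiling lands a little under 1,200 MB/s at a 1,500 MTU and a little over it with jumbo frames, so the benchmark above is well short of what the link itself allows.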

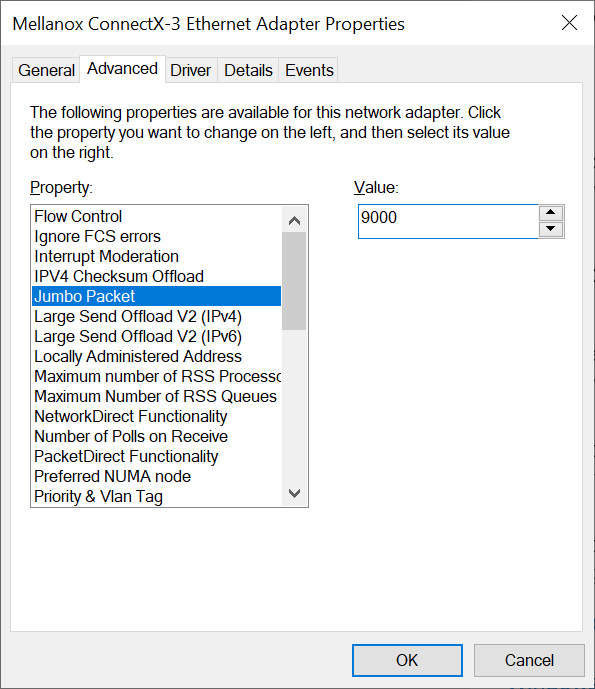

Adjust MTU

While moving networks around, I finally raised the MTU on my server to 9,000. It was set at 1,500 before, due to a mixture of devices on the LAN segment which did not support jumbo frames. However, since my bedroom/office now has a dedicated LAN segment, I am able to fully leverage jumbo frames without impacting anything else.

So, 9,000 MTU, here I come.

After adjusting the MTU size, the first test was to ensure jumbo packets can reach my NAS without fragmenting. This can be accomplished with a simple ping command with the do-not-fragment flag set.

XtremeOwnage:ping 10.100.4.24 -f -l 8955

Pinging 10.100.4.24 with 8955 bytes of data:

Reply from 10.100.4.24: bytes=8955 time<1ms TTL=63

Reply from 10.100.4.24: bytes=8955 time<1ms TTL=63

Reply from 10.100.4.24: bytes=8955 time<1ms TTL=63

Ping statistics for 10.100.4.24:

Packets: Sent = 3, Received = 3, Lost = 0 (0% loss),

Approximate round trip times in milli-seconds:

Minimum = 0ms, Maximum = 0ms, Average = 0ms

Control-C

^C
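For reference, the largest ping payload that fits in a single unfragmented packet is the MTU minus the IPv4 and ICMP headers. A minimal sketch, assuming IPv4 without options (the helper names are mine, not from any tool):

```python
# Largest ICMP echo payload that fits in one IPv4 packet at a given MTU.
# Assumes an IPv4 header without options (20 bytes) plus the 8-byte ICMP header.

IPV4_HEADER = 20
ICMP_HEADER = 8


def max_ping_payload(mtu: int) -> int:
    """Return the largest payload size usable with the do-not-fragment flag."""
    return mtu - IPV4_HEADER - ICMP_HEADER


def fits_without_fragmenting(payload: int, mtu: int) -> bool:
    """True if a ping of `payload` bytes fits in a single packet at `mtu`."""
    return payload <= max_ping_payload(mtu)


if __name__ == "__main__":
    print(max_ping_payload(9000))                # limit with jumbo frames
    print(fits_without_fragmenting(8955, 9000))  # the size used in the ping above
    print(fits_without_fragmenting(8955, 1500))  # same size on a standard MTU
```

The 8,955-byte ping used above sits just under the 9,000 MTU limit, and it would not fit at all on a 1,500 MTU path, so the successful replies show jumbo frames are working end to end.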

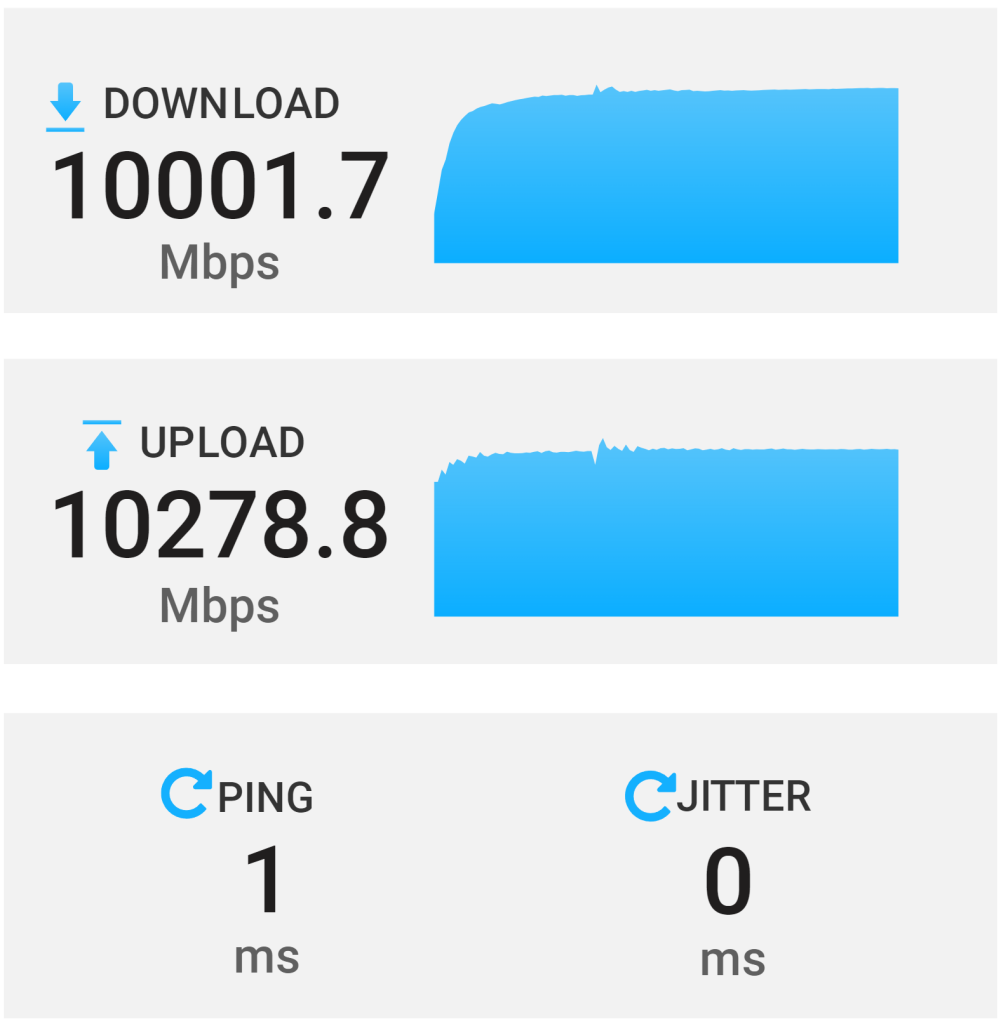

Network test with Jumbo Frames

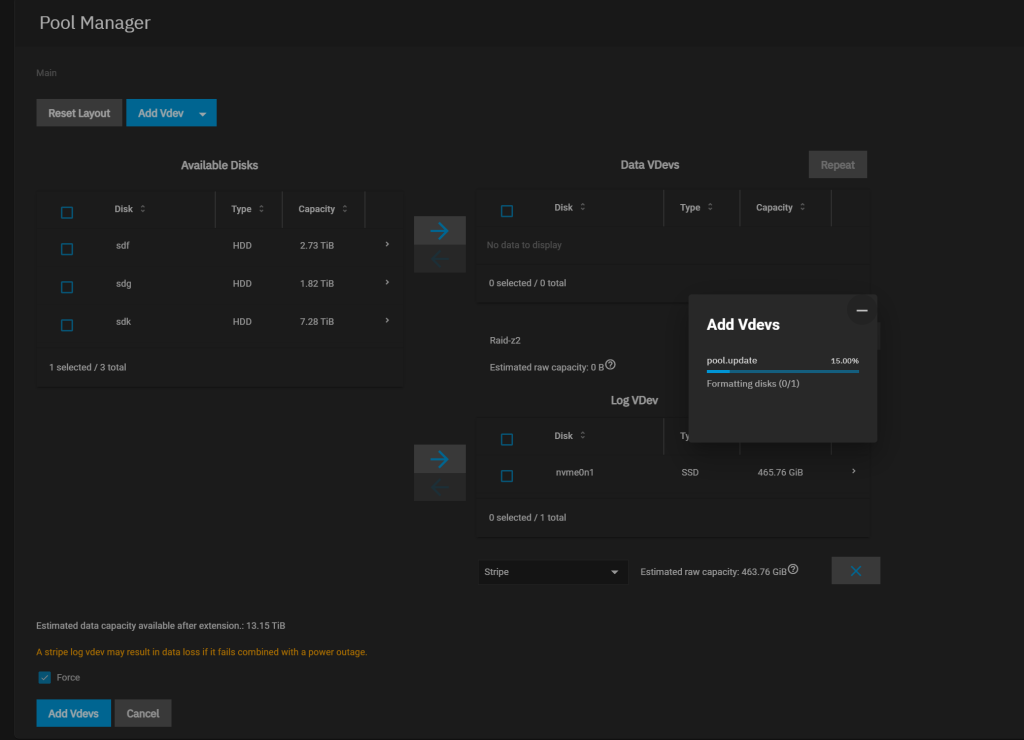

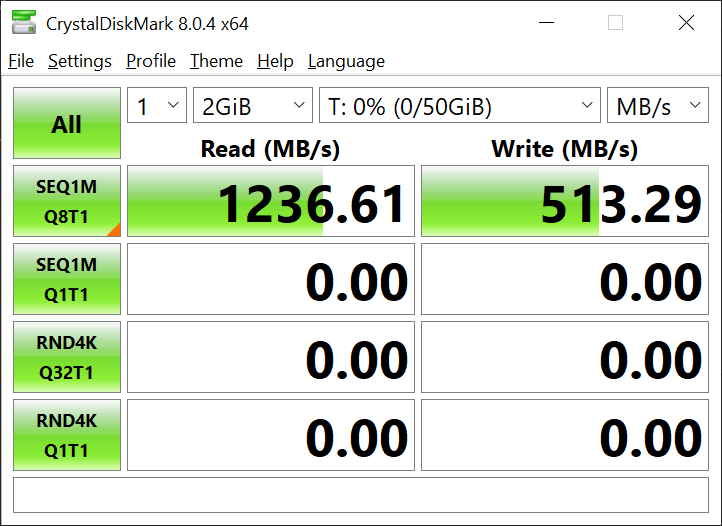

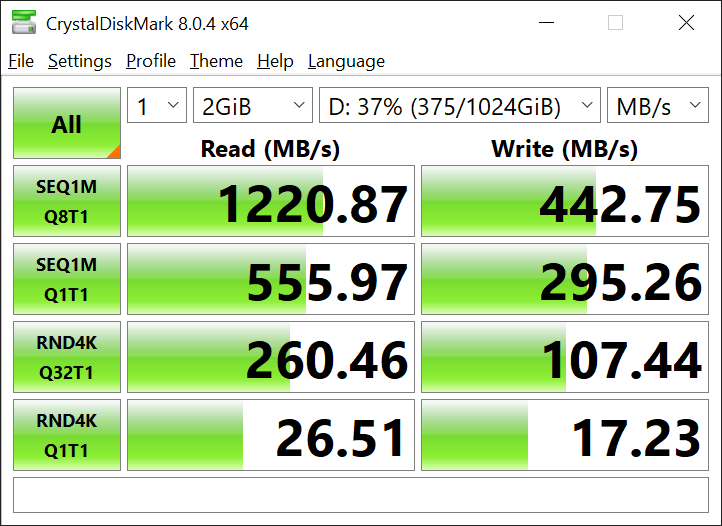

ISCSI Benchmark after Jumbo Frames

MUCH better! I am at least getting full read speeds now.

My write speeds are actually likely correct, given this is a single 8-disk Z2 array without a SLOG. As well, this is not a clean-room test. My array is running its normal production load. If you are reading this website, it is hosted on my server, on the same storage I am benchmarking right now.

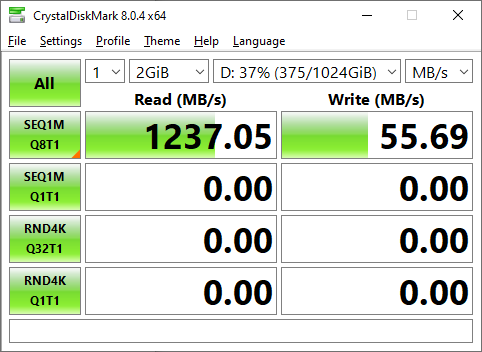

Will SLOG Help Performance?

Let's find out!

Sometime in the last month or two, I replaced my boot pool with a pair of 600G 15k SAS drives. This freed up an extra NVMe drive.

I do not at ALL recommend using only a single drive, ESPECIALLY a consumer-grade SSD, for your SLOG. If this device fails, you will have potential data corruption issues!!!

I am only going to use it for the duration of this test.

First, make sure you are forcing sync writes. You will want to set sync=always on the ZVOL you are testing with (or on your entire pool, if you plan on keeping a SLOG device). However, I am only interested in testing whether there is a noticeable performance difference.

I decided it would be useful to run another benchmark at this point, with the only change being sync=always.

As I stated before, it is strongly recommended to never use a single device for your SLOG. I had to ignore the errors and force the configuration.
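I made these changes through the TrueNAS interface, but for anyone scripting the same test, the equivalent ZFS commands can be sketched roughly as below. This only builds the command lines and does not run them. The pool, ZVOL, and device names are hypothetical placeholders, and the helper functions are my own.

```python
# Builds (but does not run) the ZFS commands matching the steps above.
# "tank", "tank/iscsi-test" and "/dev/nvme0n1" are hypothetical placeholders.
from typing import List

SYNC_MODES = ("standard", "always", "disabled")


def sync_command(dataset: str, mode: str = "always") -> List[str]:
    """Command to change the sync property on a dataset or ZVOL."""
    if mode not in SYNC_MODES:
        raise ValueError(f"sync must be one of {SYNC_MODES}, got {mode!r}")
    return ["zfs", "set", f"sync={mode}", dataset]


def add_slog_command(pool: str, device: str) -> List[str]:
    """Command to attach a single (unmirrored) log device to a pool."""
    return ["zpool", "add", pool, "log", device]


def remove_slog_command(pool: str, device: str) -> List[str]:
    """Command to detach the log device again after testing."""
    return ["zpool", "remove", pool, device]


if __name__ == "__main__":
    steps = [
        sync_command("tank/iscsi-test", "always"),
        add_slog_command("tank", "/dev/nvme0n1"),
        sync_command("tank/iscsi-test", "standard"),
        remove_slog_command("tank", "/dev/nvme0n1"),
    ]
    for argv in steps:
        print(" ".join(argv))
```

Printing the commands first makes it easy to review them before running anything against a production pool.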

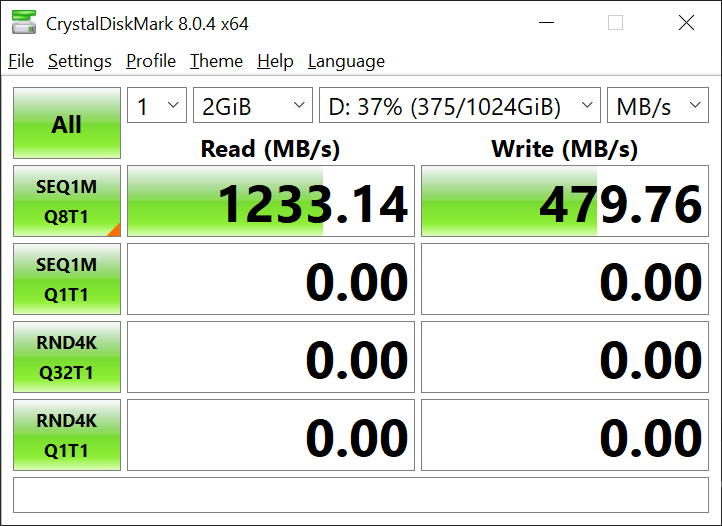

Post SLOG Benchmark

Since the SLOG device is now a part of my pool, let's once again run a benchmark to see how performance is.

Well, performance is better than it was with sync=always alone, but lower than it was before we touched anything.

Let's… remove sync=always, and see what the outcome is.

This backs up my previous opinion that a SLOG is only useful situationally. At this point, I went ahead and removed the SLOG device from the pool.

To note, this is not a perfectly fair test of a SLOG. A 970 EVO, while a fantastic NVMe drive for your home PC, is NOT a suitable device for a SLOG. Ideally, an Intel Optane 900P would be used. These are much more suitable due to vastly improved durability and reduced latency.

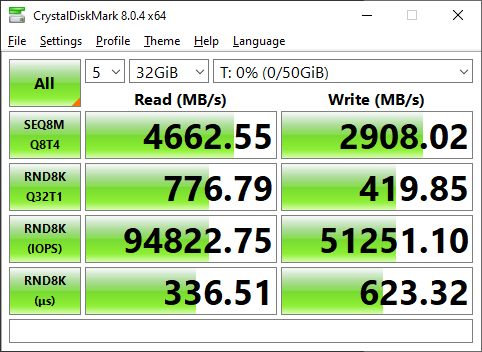

Testing Against NVMe.

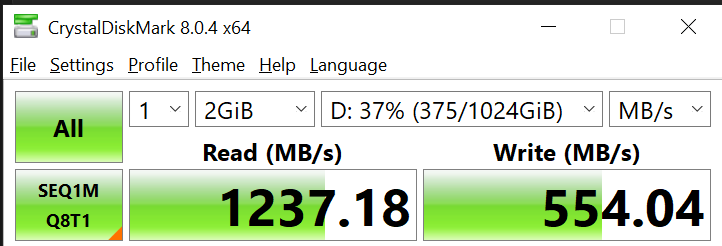

Just as a final test to ensure I am getting the expected iSCSI throughput when routed through my firewall, I went ahead and set up a 50G iSCSI mount on my mirrored NVMe array.

Interesting. The write speeds are still half of what I expected.

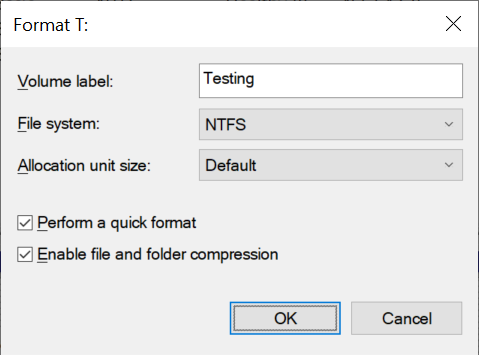

Enabling File/Folder Compression on Windows

I assumed Windows File/Folder compression might make a difference. So, I reformatted the drive and enabled compression.

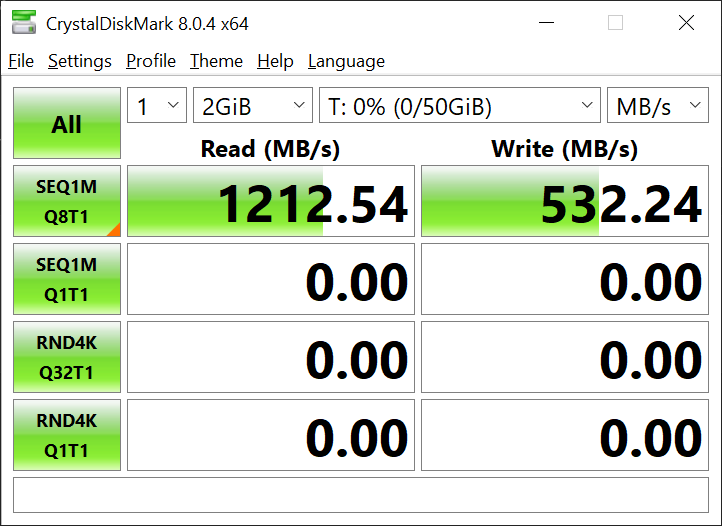

However, this actually reduced performance. Next, I went to TrueNAS and disabled compression there. This was as simple as editing the zvol and setting compression to none. After doing this, I also reformatted the drive from the Windows box and disabled File/Folder compression.

Read speeds are back to normal. However, no improvements on write speeds.

Final iSCSI results.

At this point, I decided to call it quits for now.

Worst-case scenario, the sequential read on my 8-Disk Z2 array is still twice as fast as a SATA SSD, and achieving the same read speeds. I decided to perform a full benchmark to determine the random speed of my spinning array as well.

Compare this to the budget gaming PC I built a while back, which used SATA SSDs.

Benchmark Size Disclaimer

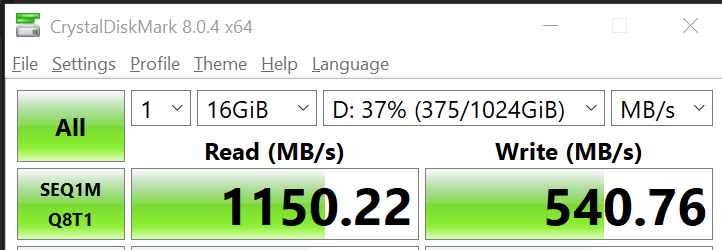

I ran most of the below benchmarks at a 2G size, because I do not care to wait 10/20/30 minutes for benchmarks to complete. To demonstrate how the results change with sample size, I also ran benchmarks at increasing sizes.

Here are benchmarks performed at the end of my testing, at various sizes, against my 8-disk Z2 array.

As noted in the above benchmarks, write speed remains extremely consistent. Read speed goes down as sample size is increased. To note, my server has 128G of RAM. For real-world use cases, there isn’t a lot of content that will not be fully cached in RAM.

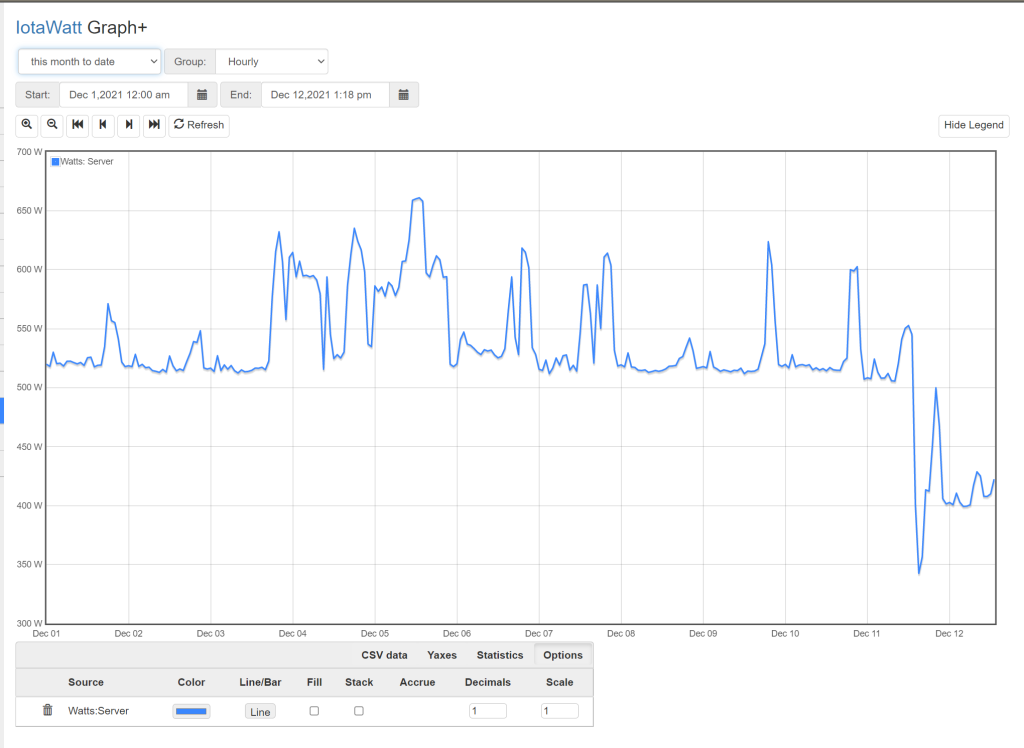

Power Consumption Differences.

Using IoTaWatt, which tracks the power consumption of many of the circuits in my home, I am able to see the exact difference in power consumed by my server rack.

Based on the data provided, it is safe to say my rack is consuming, on average, 100-150 watts less than it did before.

510-520 watts was the average minimum consumption before the change. After the change, 400 watts appears to be the new minimum.

While the total reduction was not AS much as I would have hoped, it still adds up:

(100 watts * 24 hours * 365 days) / 1000 = 876 kWh less consumption per year.

876 kWh * $0.08/kWh = about $70 less spent on electricity per year. Or… around $6 a month total savings.

To note, this does NOT include how much it costs to remove the extra heat from the house. That should add around 25% on top of the electricity cost. So, I will ballpark and say replacing the Brocade with my preexisting Zyxel saved me $100 a year.
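The arithmetic above is easy to rerun with your own numbers. A minimal sketch, treating the cooling cost as a flat 25% added on top of the electricity cost (that is my reading of the estimate, and the function name is made up for illustration):

```python
# Yearly savings from a constant reduction in power draw.
# cooling_overhead is a fraction added on top of the direct electricity cost;
# treating it as a flat 25% is a rough assumption, not a measured value.
from typing import Tuple


def annual_savings(watts_saved: float, price_per_kwh: float,
                   cooling_overhead: float = 0.0) -> Tuple[float, float]:
    """Return (kWh saved per year, dollars saved per year)."""
    kwh_per_year = watts_saved * 24 * 365 / 1000
    dollars = kwh_per_year * price_per_kwh * (1 + cooling_overhead)
    return kwh_per_year, dollars


if __name__ == "__main__":
    for watts in (100, 150):
        kwh, direct = annual_savings(watts, 0.08)
        _, with_cooling = annual_savings(watts, 0.08, cooling_overhead=0.25)
        print(f"{watts} W: {kwh:.0f} kWh/yr, ${direct:.2f} direct, "
              f"${with_cooling:.2f} including cooling")
```

With the 100-150 watt range measured above, the $100 a year ballpark falls between the low and high estimates once cooling is included.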

I could have potentially reduced that number more by using smaller, more efficient switches. However, my UniFi switch does not have enough PoE ports to satisfy my PoE needs.

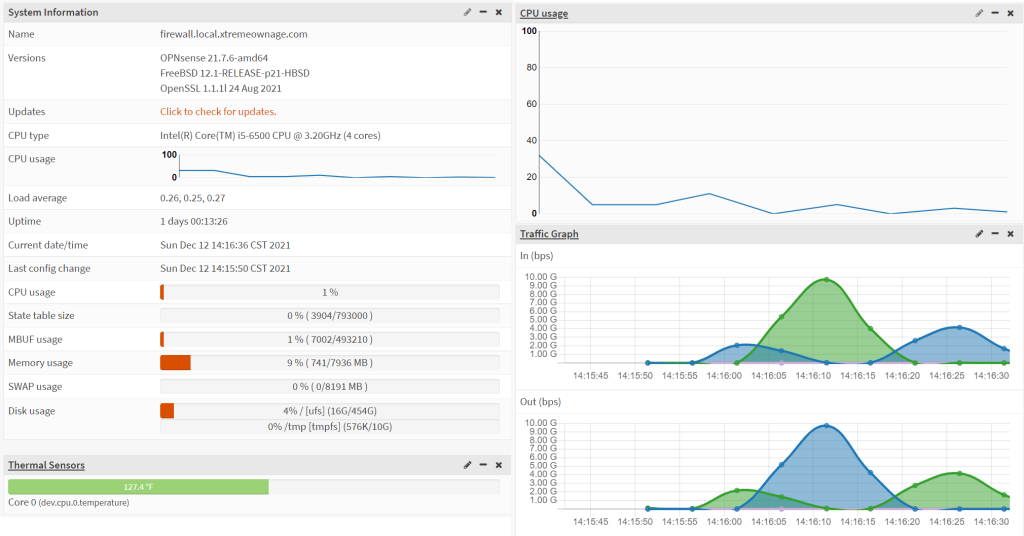

Firewall Impact

Since all of my 10G traffic is now being routed through the firewall and passing through ACLs, it was also important to document the impact on the firewall’s performance.

So, I captured the dashboard while performing an iSCSI test. The first large spike was the READ test, followed by the WRITE test. As noted, the firewall’s CPU utilization remains… basically idle.

I believe it is safe to say my firewall is hardly affected by this large volume of traffic.

Closing Notes

I was able to successfully reduce my yearly electric bill by nearly $100.

I actually IMPROVED my 10G performance with this network change. As the network was set up before, I was not able to safely increase the MTU size without impacting other devices that cannot handle the larger MTU.

I do plan on investigating further to determine why my iSCSI write speeds are lower than expected. However, for now, it is faster than it was before.

You need to show a custom message to blocked users. “Due to security reasons or what ever the reason you are blocked! ”

I wasted my time checking plugins, network etc. 🙂

Well, technically- you shouldn’t even be able to see the site! The GEOIP block is performed at cloudflare, long before it hits the site!

My understanding, if you fall into one of the blacklisted countries…. it would automatically display a message relevant to the reason.

Blocking whole country is always a bad idea.

Even if they are not your target Audience.

You can use Fail2ban or CFS

then Secure all wp dir with proper permission

maybe install wordfence

If your site is getting more attacks go behind cloudflare (Which i don’t like personally)

At the end it’s your site, you can decide who need to view it .. 🙂

I think you are facing an issue like this

https://wordpress.org/support/topic/remote-server-returned-an-unexpected-status-code-400/

I am from India and non of your sites post images are loading.

That would explain it-

I have a lot of countries GeoIP blocked, especially many non-native English speaking countries.

India, China, Belarus, Russia are the main 4 I remember blocking after repeated spam bots and vuln scanners.

But, it is interesting you can see the text, but, not the images….

No, Still getting “We cannot complete this request, remote server returned an unexpected status code (400)” All images Those images

I try different connection.

i try google translate

i try EU web proxy.

Everywhere post images are not loading

Also try safari , chrome etc.

Incognito window also the same.

I think Your site have some configuration issue

Images are not loading

Seems fine?

I just tested over two separate internet connections from both mobile as well as PC.