Well, I have been wanting to get infiniband working for a while. I decided today was as good of a time as any…. So, I made it happen.

Summary

- Was able to get infiniband working

- IPoIB performance is utter garbage.

- I didn’t want to hack up TrueNAS more to support RDMA

- Gave up, went back to my Chelsio 40GbE adaptor.

Step 1. Install a few packages.

By default, TrueNAS Scale has apt “disabled”. Please see THIS POST on how to re-enable it.

Once you have… re-enabled the ability to install packages, AND added the debian repos, we need to install a few packages to assist.

At this point, you should be able to run ibstat and get a status. If you don’t have a status, make sure your card is installed. I am using a ConnectX-3 (Non-pro)

To note, I have one port set as infiniband, and the other port set as ethernet.

root@truenas:~# ibstat

CA 'mlx4_0'

CA type: MT4099

Number of ports: 2

Firmware version: 2.42.5000

Hardware version: 1

Node GUID: 0x506b4b03007bfc50

System image GUID: 0x506b4b03007bfc53

Port 1:

State: Initializing

Physical state: LinkUp

Rate: 40

Base lid: 0

LMC: 0

SM lid: 0

Capability mask: 0x02514868

Port GUID: 0x506b4b03007bfc51

Link layer: InfiniBand

Port 2:

State: Down

Physical state: Disabled

Rate: 10

Base lid: 0

LMC: 0

SM lid: 0

Capability mask: 0x00010000

Port GUID: 0x526b4bfffe7bfc52

Link layer: Ethernet

How to change configuration parameters

# First- get the PCI address of your card.

root@truenas:~# lspci | grep Mell

03:00.0 Network controller: Mellanox Technologies MT27500 Family [ConnectX-3]

# In this case- 03:00.0 is my address.

# Show current configuration

root@truenas:~# mstconfig -d 03:00.0 query

Device #1:

----------

Device type: ConnectX3

Device: 03:00.0

Configurations: Next Boot

SRIOV_EN True(1)

NUM_OF_VFS 8

LINK_TYPE_P1 IB(1)

LINK_TYPE_P2 ETH(2)

LOG_BAR_SIZE 3

BOOT_PKEY_P1 0

BOOT_PKEY_P2 0

BOOT_OPTION_ROM_EN_P1 False(0)

BOOT_VLAN_EN_P1 False(0)

BOOT_RETRY_CNT_P1 0

LEGACY_BOOT_PROTOCOL_P1 PXE(1)

BOOT_VLAN_P1 1

BOOT_OPTION_ROM_EN_P2 False(0)

BOOT_VLAN_EN_P2 False(0)

BOOT_RETRY_CNT_P2 0

LEGACY_BOOT_PROTOCOL_P2 PXE(1)

BOOT_VLAN_P2 1

IP_VER_P1 IPv4(0)

IP_VER_P2 IPv4(0)

CQ_TIMESTAMP True(1)

# Set port mode to infiniband

mstconfig -d 03:00.0 set LINK_TYPE_P1=1

# Set port mode to ethernet

mstconfig -d 03:00.0 set LINK_TYPE_P1=2

# You can also set multiple properties in the same command

mstconfig -d 03:00.0 set LINK_TYPE_P1=1 LINK_TYPE_P2=1

# Disable boot rom, if you desire. I don't have plans on using it.

mstconfig -d 03:00.0 set LINK_TYPE_P1=1 BOOT_OPTION_ROM_EN_P1=0 BOOT_OPTION_ROM_EN_P2=0
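Note that the query output above is labelled "Next Boot", so a changed port type should only take effect after a reboot. As a small sketch for confirming what is queued up, the helper below filters the query output down to the link-type lines. The function name `link_types` is made up for this example, and it assumes the query output keeps the two-column layout shown above.

```shell
#!/bin/sh
# Hypothetical helper: reads `mstconfig ... query` output on stdin and
# prints only the per-port link type settings as NAME=VALUE.
link_types() {
    awk '$1 ~ /^LINK_TYPE_P[0-9]+$/ { print $1 "=" $2 }'
}
```

On the server it would be used as `mstconfig -d 03:00.0 query | link_types`, which for the configuration shown earlier should print one line for each of the two ports.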

Step 2. Ping across the network

This guide ASSUMES you already have a working physical link established between two infiniband cards.

In my case, I have a windows client, and a TrueNAS server.

Next, let’s list the connected hosts.

root@truenas:~# ibhosts

ibwarn: [2618545] get_abi_version: can't read ABI version from /sys/class/infiniband_mad/abi_version (No such file or directory): is ib_umad module loaded?

ibwarn: [2618545] mad_rpc_open_port: can't open UMAD port ((null):0)

../libibnetdisc/ibnetdisc.c:802; can't open MAD port ((null):0)

/usr/sbin/ibnetdiscover: iberror: failed: discover failed

Seems… a kernel module is missing. Let’s add it, and try again.

root@truenas:~# modprobe ib_umad

# I also went ahead and loaded the IP over InfiniBand module.

root@truenas:~# modprobe ib_ipoib

root@truenas:~# ibhosts

Ca : 0x0002c90300a29060 ports 2 "XTREMEOWNAGE-PC"

Ca : 0x506b4b03007bfc50 ports 2 "MT25408 ConnectX Mellanox Technologies"

Well, look at that. The two PCs can see each other over infiniband.

The next step, was to allocate an ip address for the infiniband adapter.

ibp3s0: flags=4098<BROADCAST,MULTICAST> mtu 4092

unspec 80-00-02-28-FE-80-00-00-00-00-00-00-00-00-00-00 txqueuelen 256 (UNSPEC)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

As we can see, it doesn’t have an address. I provisioned the IP address through the TrueNAS WebGUI.

After saving the changes, applying, etc…. I was able to ping the local IP, but, not the remote IP.

root@truenas:~# ping 10.100.255.1

PING 10.100.255.1 (10.100.255.1) 56(84) bytes of data.

64 bytes from 10.100.255.1: icmp_seq=1 ttl=64 time=0.052 ms

^C

--- 10.100.255.1 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

rtt min/avg/max/mdev = 0.052/0.052/0.052/0.000 ms

root@truenas:~# ping 10.100.255.2

PING 10.100.255.2 (10.100.255.2) 56(84) bytes of data.

^C

Doing a bit of troubleshooting, I ran ibstat on both ends, and determined the link was stuck in the “Initializing” state.

After a bit of research, I determined that an InfiniBand fabric needs a subnet manager, even for a point-to-point link. Without one, the ports never get assigned a LID, and never move to “Active”.

So…

root@truenas:~# apt-get install opensm

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following NEW packages will be installed:

opensm

...
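To spot this condition without reading the whole ibstat dump, the port state and SM lid lines can be pulled out with a bit of awk. This is only a sketch: the function names `port_states` and `needs_subnet_manager` are invented for this example, and it assumes the Linux ibstat layout shown earlier (the Windows output is formatted slightly differently).

```shell
#!/bin/sh
# Hypothetical helper: reads `ibstat` output on stdin and prints one line
# per port in the form "<port> <state> <sm_lid>".
port_states() {
    awk '
        $1 == "Port" && $2 ~ /^[0-9]+:$/ { port = $2; sub(/:$/, "", port) }
        $1 == "State:"                   { state[port] = $2 }
        $1 == "SM" && $2 == "lid:"       { print port, state[port], $3 }
    '
}

# Succeeds (exit 0) if any port is Initializing with no subnet manager
# LID assigned, which is what a link without a running SM looks like.
needs_subnet_manager() {
    port_states | awk '$2 == "Initializing" && $3 == 0 { found = 1 } END { exit !found }'
}
```

For example, `ibstat | needs_subnet_manager && echo "no subnet manager on the fabric"` would have flagged the first ibstat output in this post.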

At this point, I decided to do a reboot, to ensure all of the modules are being properly loaded at boot.

Or… to see if…. all of the above work has been reset.

After rebooting, all of the configuration and packages were still in place. However, the kernel modules were not loaded back in. So… I reloaded the modules manually, and added a config to load them at boot.

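# Note: modprobe needs -a to load several modules at once. Without it, the extra names are treated as parameters for the first module.

root@truenas:~# modprobe -a ib_ipoib ib_umad ib_uverbs rdma_ucm

root@truenas:~# nano /etc/modules-load.d/infiniband.conf

# File Contents:

ib_umad

ib_ipoib

ib_uverbs

rdma_ucm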
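If you would rather script this than open an editor, something along these lines should work. It is a sketch only: `write_ib_modules_conf` and `missing_modules` are names made up for this example, and the module list is simply the one from the file above.

```shell
#!/bin/sh
# Hypothetical helper: write the module list, one name per line,
# to the path given as the first argument.
write_ib_modules_conf() {
    printf '%s\n' ib_umad ib_ipoib ib_uverbs rdma_ucm > "$1"
}

# Hypothetical helper: reads `lsmod` output on stdin and prints every
# module named in the config file ($1) that is not currently loaded.
missing_modules() {
    conf="$1"
    # Skip the lsmod header line and keep only the module name column.
    loaded="$(awk 'NR > 1 { print $1 }')"
    while IFS= read -r mod; do
        # Ignore blank lines and comments in the config file.
        case "$mod" in ''|'#'*) continue ;; esac
        printf '%s\n' "$loaded" | grep -qxF "$mod" || printf '%s\n' "$mod"
    done < "$conf"
}
```

As root, `write_ib_modules_conf /etc/modules-load.d/infiniband.conf` creates the file, and after a reboot `lsmod | missing_modules /etc/modules-load.d/infiniband.conf` should print nothing if everything loaded.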

The next step, was to get opensm up and running.

root@truenas[/etc/modules-load.d]# systemctl status opensm

● opensm.service - Starts the OpenSM InfiniBand fabric Subnet Managers

Loaded: loaded (/lib/systemd/system/opensm.service; enabled; vendor preset: enabled)

Active: inactive (dead)

Condition: start condition failed at Sat 2022-03-26 13:26:55 CDT; 8min ago

Docs: man:opensm(8)

Mar 26 13:26:55 truenas.local.xtremeownage.com systemd[1]: Condition check resulted in Starts the OpenSM InfiniBand fabric Subnet Managers being skipped.

## I assume it failed to start due to the missing kernel modules. Let's start it.

root@truenas[/etc/modules-load.d]# systemctl start opensm

root@truenas[/etc/modules-load.d]# systemctl status opensm

● opensm.service - Starts the OpenSM InfiniBand fabric Subnet Managers

Loaded: loaded (/lib/systemd/system/opensm.service; enabled; vendor preset: enabled)

Active: active (exited) since Sat 2022-03-26 13:35:38 CDT; 1s ago

Docs: man:opensm(8)

Process: 296934 ExecCondition=/bin/sh -c if test "$PORTS" = NONE; then echo "opensm is disabled via PORTS=NONE."; exit 1; fi (code=exited, status=0/SUCCESS)

Process: 296935 ExecStart=/bin/sh -c if test "$PORTS" = ALL; then PORTS=$(/usr/sbin/ibstat -p); if test -z "$PORTS"; then echo "No InfiniBand ports found."; exi>

Main PID: 296935 (code=exited, status=0/SUCCESS)

Mar 26 13:35:38 truenas.local.xtremeownage.com systemd[1]: Starting Starts the OpenSM InfiniBand fabric Subnet Managers...

Mar 26 13:35:38 truenas.local.xtremeownage.com sh[296935]: Starting opensm on following ports: 0x506b4b03007bfc51

Mar 26 13:35:38 truenas.local.xtremeownage.com sh[296935]: 0x526b4bfffe7bfc52

Mar 26 13:35:38 truenas.local.xtremeownage.com systemd[1]: Finished Starts the OpenSM InfiniBand fabric Subnet Managers.

## Much better.

At this point, I checked the stats for the infiniband nics.

root@truenas[/etc/modules-load.d]# ibstat

CA 'mlx4_0'

CA type: MT4099

Number of ports: 2

Firmware version: 2.42.5000

Hardware version: 1

Node GUID: 0x506b4b03007bfc50

System image GUID: 0x506b4b03007bfc53

Port 1:

State: Active

Physical state: LinkUp

Rate: 40

Base lid: 1

LMC: 0

SM lid: 1

Capability mask: 0x0251486a

Port GUID: 0x506b4b03007bfc51

Link layer: InfiniBand

Port 2:

State: Down

Physical state: Disabled

Rate: 10

Base lid: 0

LMC: 0

SM lid: 0

Capability mask: 0x00010000

Port GUID: 0x526b4bfffe7bfc52

Link layer: Ethernet

## Boom, good to go on the truenas side.

## On the windows side.

> ibstat

CA 'ibv_device0'

CA type:

Number of ports: 2

Firmware version: 2.42.5000

Hardware version: 0x0

Node GUID: 0x0002c90300a29060

System image GUID: 0x0002c90300a29063

Port 1:

State: Down

Physical state: Disabled

Rate: 1

Base lid: 0

LMC: 0

SM lid: 0

Capability mask: 0x80500000

Port GUID: 0x0202c9fffea29061

Link layer: Ethernet

Transport: RoCE v1.25

Port 2:

State: Active

Physical state: LinkUp

Rate: 40

Real rate: 32.00 (QDR)

Base lid: 2

LMC: 0

SM lid: 1

Capability mask: 0x90580000

Port GUID: 0x0002c90300a29062

Link layer: IB

Transport: IB

Excellent, the link is active on both sides!

Since the module was not loaded when the machine rebooted, the interface was down. I used ifconfig to bring it up.

root@truenas[/etc/modules-load.d]# ifconfig ibp3s0 up

root@truenas[/etc/modules-load.d]# ifconfig ibp3s0

ibp3s0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 2044

inet 10.100.255.1 netmask 255.255.255.252 broadcast 10.100.255.3

inet6 fe80::526b:4b03:7b:fc51 prefixlen 64 scopeid 0x20<link>

unspec 80-00-02-28-FE-80-00-00-00-00-00-00-00-00-00-00 txqueuelen 256 (UNSPEC)

RX packets 2 bytes 212 (212.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 37 bytes 11759 (11.4 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

root@truenas[/etc/modules-load.d]# ping 10.100.255.1

PING 10.100.255.1 (10.100.255.1) 56(84) bytes of data.

64 bytes from 10.100.255.1: icmp_seq=1 ttl=64 time=0.056 ms

64 bytes from 10.100.255.1: icmp_seq=2 ttl=64 time=0.049 ms

^C

--- 10.100.255.1 ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 1030ms

rtt min/avg/max/mdev = 0.049/0.052/0.056/0.003 ms

We can now ping over IP. Let’s do a speed test.

## TrueNAS doesn't ship with iperf2. It does include iperf3, however, getting speed results above 10G isn't consistent using iperf3, due to its single-threaded nature....

## So, I prefer iperf2 for testing over 10G.

root@truenas[/etc/modules-load.d]# apt-get install iperf

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following NEW packages will be installed:

iperf

0 upgraded, 1 newly installed, 0 to remove and 105 not upgraded.

...

root@truenas[/etc/modules-load.d]# iperf -v

iperf version 2.0.14a (2 October 2020) pthreads

root@truenas[/etc/modules-load.d]# iperf -s

------------------------------------------------------------

Server listening on TCP port 5001

TCP window size: 128 KByte (default)

------------------------------------------------------------

## on the windows side.

> iperf.exe -c 10.100.255.1

------------------------------------------------------------

Client connecting to 10.100.255.1, TCP port 5001

TCP window size: 208 KByte (default)

------------------------------------------------------------

[ 3] local 10.100.255.2 port 23277 connected with 10.100.255.1 port 5001

[ ID] Interval Transfer Bandwidth

[ 3] 0.0-10.0 sec 7.69 GBytes 6.60 Gbits/sec

> iperf.exe -c 10.100.255.1 -P 10

------------------------------------------------------------

Client connecting to 10.100.255.1, TCP port 5001

TCP window size: 208 KByte (default)

------------------------------------------------------------

[ 12] local 10.100.255.2 port 23297 connected with 10.100.255.1 port 5001

[ 10] local 10.100.255.2 port 23295 connected with 10.100.255.1 port 5001

[ 11] local 10.100.255.2 port 23296 connected with 10.100.255.1 port 5001

[ 9] local 10.100.255.2 port 23294 connected with 10.100.255.1 port 5001

[ 7] local 10.100.255.2 port 23292 connected with 10.100.255.1 port 5001

[ 6] local 10.100.255.2 port 23291 connected with 10.100.255.1 port 5001

[ 8] local 10.100.255.2 port 23293 connected with 10.100.255.1 port 5001

[ 5] local 10.100.255.2 port 23290 connected with 10.100.255.1 port 5001

[ 4] local 10.100.255.2 port 23289 connected with 10.100.255.1 port 5001

[ 3] local 10.100.255.2 port 23288 connected with 10.100.255.1 port 5001

[ ID] Interval Transfer Bandwidth

[ 12] 0.0-10.0 sec 1.07 GBytes 916 Mbits/sec

[ 10] 0.0-10.0 sec 899 MBytes 753 Mbits/sec

[ 6] 0.0-10.0 sec 1.01 GBytes 870 Mbits/sec

[ 8] 0.0-10.0 sec 220 MBytes 185 Mbits/sec

[ 5] 0.0-10.0 sec 561 MBytes 470 Mbits/sec

[ 9] 0.0-10.1 sec 695 MBytes 578 Mbits/sec

[ 11] 0.0-10.1 sec 674 MBytes 557 Mbits/sec

[ 7] 0.0-10.2 sec 346 MBytes 285 Mbits/sec

[ 4] 0.0-10.2 sec 253 MBytes 208 Mbits/sec

[ 3] 0.0-10.2 sec 681 MBytes 560 Mbits/sec

[SUM] 0.0-10.2 sec 6.31 GBytes 5.31 Gbits/sec

Well, that was extremely disappointing!
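To put a number on "disappointing", here is a rough sketch of the arithmetic. It assumes iperf2 reports GBytes in units of 2^30 bytes and Gbits/sec in units of 10^9 bits, which is consistent with the figures in the output above. The 32 Gbit/s value is the "Real rate" the Windows ibstat reported for the QDR link. Both function names are made up for this example.

```shell
#!/bin/sh
# gbits_per_sec GBYTES SECONDS
# Convert an iperf2 transfer size (GBytes, 2^30 bytes each) and duration
# into Gbit/s (10^9 bits each).
gbits_per_sec() {
    awk -v gb="$1" -v s="$2" 'BEGIN { printf "%.2f\n", gb * 1073741824 * 8 / s / 1e9 }'
}

# pct_of_link GBITS LINK_GBITS
# Express a measured rate as a percentage of the link data rate.
pct_of_link() {
    awk -v g="$1" -v l="$2" 'BEGIN { printf "%.1f\n", g / l * 100 }'
}
```

With the [SUM] line above, `gbits_per_sec 6.31 10.2` gives 5.31, and `pct_of_link 5.31 32` gives 16.6, so the ten-stream run used roughly a sixth of what the link can carry.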

Troubleshooting poor performance

The first step is always to check MTU. I found the server was configured for 8,000, however, my client only supported 4092 max.

root@truenas# ifconfig ibp3s0 mtu 4092 up

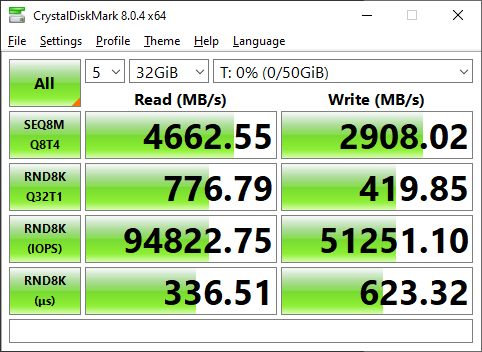

Just to confirm this wasn’t an iperf issue, I decided to do a quick iSCSI performance test.

Only 5Gbits….. far short of the expected 40/56Gbits.

Well… let’s double-check MTU.

root@truenas[/etc/modules-load.d]# ifconfig ibp3s0 mtu 4092

root@truenas[/etc/modules-load.d]# ifconfig ibp3s0

ibp3s0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 2044

inet 10.100.255.1 netmask 255.255.255.252 broadcast 10.100.255.3

inet6 fe80::526b:4b03:7b:fc51 prefixlen 64 scopeid 0x20<link>

unspec 80-00-02-28-FE-80-00-00-00-00-00-00-00-00-00-00 txqueuelen 256 (UNSPEC)

RX packets 23373377 bytes 47020734796 (43.7 GiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 6652816 bytes 6411049427 (5.9 GiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

## Appears the card on my server only supports 2044 MTU... this might explain the issues.

So… I set the MTU on both ends to 2044.

Sadly, this did not help performance.
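A note on where 2044 and 4092 come from. Per the Linux kernel's IPoIB documentation, in datagram mode the interface MTU is the InfiniBand MTU minus a 4-byte IPoIB header, while connected mode allows an MTU of up to 65520. So 2044 is likely a result of the fabric running with a 2048-byte IB MTU, rather than a hard limit of the card, although I did not verify that here. The sketch below just does that arithmetic; `ipoib_mtu` is a made-up name for this example.

```shell
#!/bin/sh
# ipoib_mtu MODE IB_MTU
# Print the largest IP MTU an IPoIB interface can use in the given mode.
ipoib_mtu() {
    case "$1" in
        datagram)
            # IB MTU minus the 4-byte IPoIB encapsulation header.
            echo $(( $2 - 4 ))
            ;;
        connected)
            # Connected mode is not bounded by the IB MTU.
            echo 65520
            ;;
        *)
            echo "unknown mode: $1" >&2
            return 1
            ;;
    esac
}
```

On Linux the current mode can be read with `cat /sys/class/net/ibp3s0/mode`. Whether connected mode would have helped in this setup is untested, and it would also depend on what the Windows driver supports.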

Conclusion.

I DID successfully manage to get infiniband up and running.

I DID learn, IPoIB (IP over InfiniBand) is actually quite slow. To realize huge performance gains over using the NIC in ETH mode, you would need to leverage RDMA/RoCE.

Since, I have hacked up my TrueNAS install enough for the day, I decided to switch everything back to ethernet mode, and call it a day. I can easily get 40G out of ethernet mode.

How to switch back to ethernet mode.

If you recall earlier, I left one interface in ETH mode…. I am just going to switch the physical adaptor, and move the ip address over… so, I don’t need to reboot my PC.

ifconfig ibp3s0 0.0.0.0 0.0.0.0

ifconfig enp3s0d1 10.100.255.1/30 mtu 9000 up

root@truenas[/etc/modules-load.d]# ifconfig enp3s0d1

enp3s0d1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 9000

inet 10.100.255.1 netmask 255.255.255.252 broadcast 10.100.255.3

inet6 fe80::526b:4bff:fe7b:fc52 prefixlen 64 scopeid 0x20<link>
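For anyone on a system without ifconfig, the same address move can be sketched with iproute2. This is an assumed equivalent rather than what I actually ran. The `run` and `move_ip` helpers are made up for this example, and by default they only print the commands instead of executing them.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) only prints the commands.
# Set DRY_RUN=0 and run as root to actually apply them.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# move_ip OLD_IF NEW_IF CIDR MTU
# Remove the IPv4 addresses from OLD_IF, then assign CIDR to NEW_IF,
# set its MTU, and bring it up.
move_ip() {
    run ip -4 addr flush dev "$1"
    run ip addr add "$3" dev "$2"
    run ip link set dev "$2" mtu "$4" up
}
```

Calling `move_ip ibp3s0 enp3s0d1 10.100.255.1/30 9000` prints the three ip commands, which makes it easy to review them before running with `DRY_RUN=0`. Like the ifconfig version, this does not persist across a reboot.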

## On the windows side, I also physically swapped the adaptors over.

root@truenas[/etc/modules-load.d]# ping 10.100.255.2

PING 10.100.255.2 (10.100.255.2) 56(84) bytes of data.

64 bytes from 10.100.255.2: icmp_seq=1 ttl=128 time=0.482 ms

64 bytes from 10.100.255.2: icmp_seq=2 ttl=128 time=0.162 ms

## Connection is good to go.... Let's test performance.

[SUM] 0.0000-10.0208 sec 10.7 GBytes 9.15 Gbits/sec

## Appears my client is only connected at 10G.... interesting.

root@truenas[/etc/modules-load.d]# ethtool enp3s0d1

Settings for enp3s0d1:

Supported ports: [ FIBRE ]

Supported link modes: 10000baseKX4/Full

40000baseCR4/Full

40000baseSR4/Full

56000baseCR4/Full

56000baseSR4/Full

1000baseX/Full

10000baseCR/Full

10000baseSR/Full

Supported pause frame use: Symmetric Receive-only

Supports auto-negotiation: Yes

Supported FEC modes: Not reported

Advertised link modes: 10000baseKX4/Full

40000baseCR4/Full

40000baseSR4/Full

1000baseX/Full

10000baseCR/Full

10000baseSR/Full

Advertised pause frame use: Symmetric

Advertised auto-negotiation: Yes

Advertised FEC modes: Not reported

Speed: 10000Mb/s

Duplex: Full

Auto-negotiation: off

Port: FIBRE

PHYAD: 0

Transceiver: internal

Supports Wake-on: d

Wake-on: d

Current message level: 0x00000014 (20)

link ifdown

Link detected: yes

## Wonder what happens if I uh, FORCE it to 40g.

root@truenas[/etc/modules-load.d]# ethtool -s enp3s0d1 speed 40000 duplex full autoneg off

## Turns out, the connection on the windows side dropped.... I am starting to think this particular ConnectX-3 does not support 40G.

## I know the adaptor on the server side supports 40G, because it's the same exact adaptor I used to benchmark 40G in a previous post!

I found documentation on my particular NIC here: https://downloads.dell.com/manuals/all-products/esuprt_ser_stor_net/esuprt_pedge_srvr_ethnt_nic/mellanox-adapters_users-guide_en-us.pdf

Based on the specifications, it “should” support 40G ethernet mode…

Edit- Turns out, it supported 40G InfiniBand, but only 10GbE in ethernet mode.

Yup. Oh well. Putting the chelsio NIC back in my PC. It had no problems doing 40G.